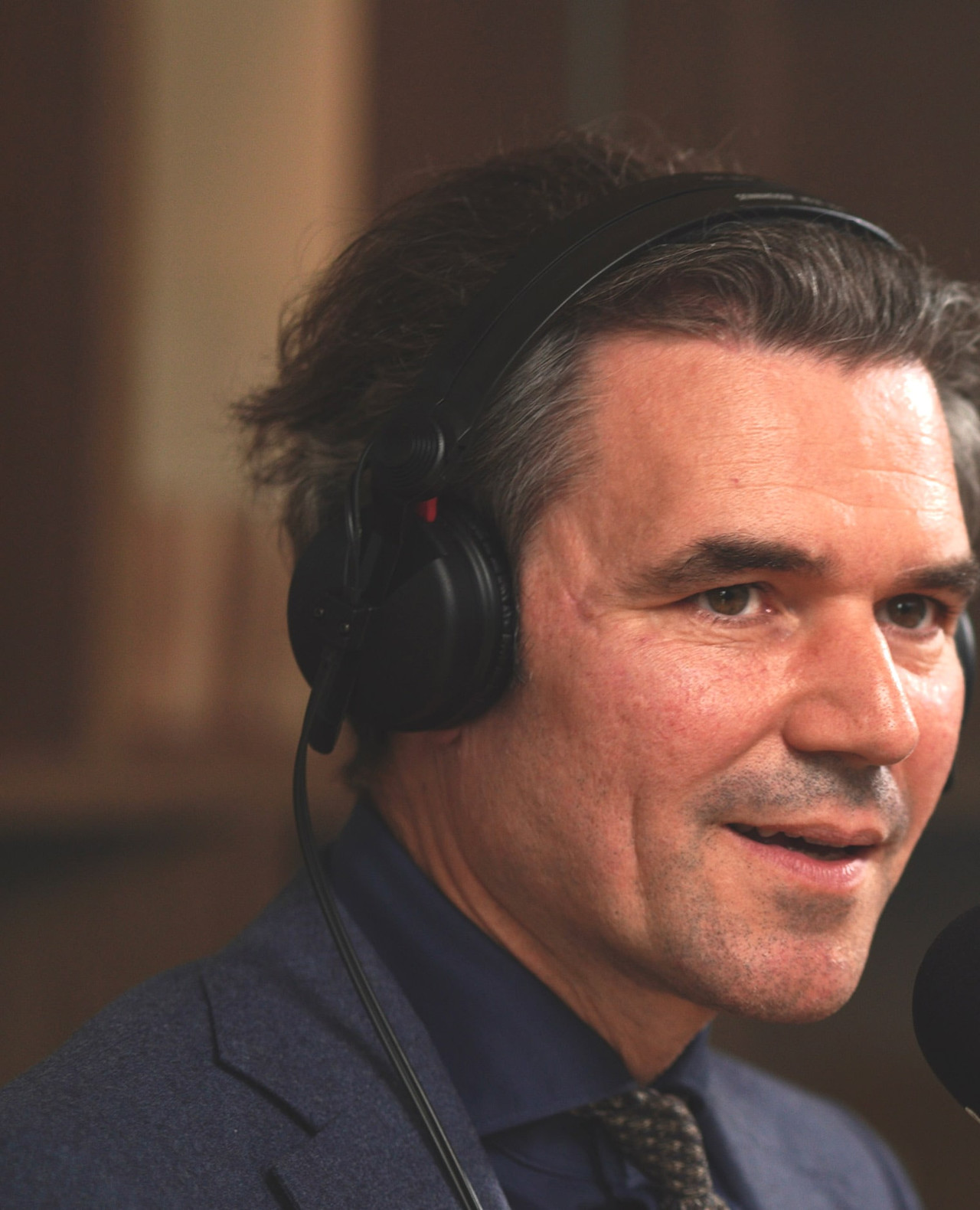

Our insights

Understanding where markets are going, and the impact that has, is the hardest task every investment professional faces. Developing that understanding takes robust research and insight, which is why we put so much effort into both. Only by sharing knowledge can we all prosper.